Monday, October 3, 2011

We've Moved...

Thursday, March 10, 2011

Outsourcing IT

Business knows that an IT department is important. It saves money in many ways, keeps the back office running and helps execute business processes. But in many organizations IT costs too much, with all its security, high availability, disaster recovery, compliance and support requirements. Business cringes at all the capital project proposals and budgets for IT spending. This is why it is looking for an alternative: one that provides back-office support without the worry of all the high-ticket items, like HA, DR and GRC, that IT seems to add to the budget proposal every year. And this is exactly what the “cloud” tries to provide. The cloud is an abstracted business function, where all the high-ticket IT items are spread over multiple clients and are thus cheaper for any particular client. The IT department, after all, is just an expense paid by the business, with no real intrinsic value of its own.

The business, of course, wants a high level of service, a good “Service Level Agreement” that covers the needs of the business. This is where we enter the world of ITIL. ITIL’s SLAs are a step toward getting IT outsourced. An SLA that adds no extra value is a way to make IT separable, a commodity. I am not saying SLAs are bad. I am saying that if you excel at delivering the services in the SLAs without bringing benefits to the business, you are no different from a third-party outlet selling server time for a monthly fee.

So, before you dismiss the “cloud” business as yet another popular but short-lived word in the IT vernacular, think of the implications this model has for the future of IT. There is a trend of businesses cutting back on their IT departments. I really see only one way for the IT department to survive this transition: IT can live on by becoming a cloud integration department. At the low level, someone needs to integrate in-house systems with the clouds during and after the transition to cloud-based services. At the high level, someone needs to understand the business and know how to map it to the services that different clouds provide.

Granted, it may take a decade for the clouds to arrive in force, depending on how hard the business pushes toward cost-cutting. But if you are working in an IT department, start training now for one of these roles.

PS. Yes, the cloud providers will need IT skills to develop and maintain their cloud offerings, but the number of such jobs will be much smaller than today’s in-house IT staff.

To see the original blog entry, please click here.

Alex Ivkin is a senior IT Security Architect with a focus on Identity and Access Management at Prolifics. Mr. Ivkin has worked with executive stakeholders in large and small organizations to help drive security initiatives. He has helped companies succeed in attaining regulatory compliance, improving business operations and securing enterprise infrastructure. Mr. Ivkin has achieved the highest levels of certification with several major Identity Management vendors and holds the CISSP designation. He is also a speaker at various conferences and an active member of several user communities.

Tuesday, March 8, 2011

Enterprise Single Sign-On Tug of War

- Desktop support team: Man, it replaces the Microsoft GINA. We need to provision it to all of the existing desktops, test it on our gold build, communicate with the entire affected user population… It’ll take more than you think to implement it.

- Business: Ok, so let’s see how well you manage your assets. If you know them, can provision them and keep them homogeneous, you should not have too many problems. If not, let’s work on asset management first.

- Infrastructure: Users want to be automatically logged in to an enterprise app that is not covered by ESSO yet. Now we’ve got to develop another profile. This is not easy. The development, testing and support will take a lot of time.

- Business: Yes, that is the ongoing cost of ESSO. Either engage the vendors, get the training and do it in-house, or outsource it.

- Infrastructure: Now we have to have staff to support another server, another database and a bunch of desktops.

- Security: Hey, but no more sticky notes under keyboards with passwords.

- Help desk: We are getting more calls about desktop apps incompatible with the ESSO.

- Business: The incompatible apps will have to be worked through with the desktop support and the vendors.

- Security: We do not want to accept the responsibility for accidentally exposing all personal logins people may store in ESSO, like passwords for web-mail, Internet banking, shopping, forums, you name it.

- Consultant: Set ESSO up with personal, per-user key encryption. The downside, though, is that if a user changes their password and then forgets their response to a challenge question, they will lose their stored passwords.

- Help desk: Everybody is forgetting their responses to the challenge questions. People are unhappy about having to lose their stored passwords.

- Consultant: Set ESSO up with a global key, and let the Security department worry about an appropriate use policy and the privacy policy.

- Security: We do not want to send people their on-boarding passwords in plain text in an e-mail, or print them out.

- Consultant: Integrate your ESSO with an identity management solution and have it automatically distribute passwords to people’s wallets.

- Infrastructure: All the setup, configuration and support takes so much time!

- Business and End Users: Hey, it is nice not to have to type enterprise passwords every time. The help desk is getting fewer calls about recovering forgotten passwords. It saves so much time!

To see the original blog entry, please click here.

Alex Ivkin is a senior IT Security Architect with a focus on Identity and Access Management at Prolifics. Mr. Ivkin has worked with executive stakeholders in large and small organizations to help drive security initiatives. He has helped companies succeed in attaining regulatory compliance, improving business operations and securing enterprise infrastructure. Mr. Ivkin has achieved the highest levels of certification with several major Identity Management vendors and holds the CISSP designation. He is also a speaker at various conferences and an active member of several user communities.

Tuesday, February 22, 2011

The Not-So-Secret, Secret MQ Script

For those who have not installed WebSphere MQ on Linux or Unix systems: certain kernel parameters pertaining to semaphores and shared memory must be set at or above a minimum level. If they are not, MQ may not operate correctly, which on a production system spells disaster. The WebSphere MQ Info Center has a “Quick Beginnings for Linux” section, which walks users through the pre-installation tasks that need to be completed. Naturally, there is a section about setting the kernel parameters.

This section tells users to run the command “ipcs -l”, which displays the kernel parameters and their current settings, and provides an example of the minimal settings that MQ Server requires. The “ipcs -l” command displays the parameters under headings such as “Semaphore Limits” and “Shared Memory Limits”, with a descriptive label for each value, for example “max semaphores per array” or “max number of arrays”.

One would think the requirements would be listed in the same format, so that an admin could check the parameter settings MQ requires, make the changes, and move on to the install. The problem is that the Info Center page doesn’t use this format. It lists the requirements as sysctl parameter names and values instead, such as kernel.shmmax and kernel.sem.

If you examine these two formats long enough, you can work out some of the correlations. Others, such as the kernel.sem setting, can be interpreted in many ways, because some of its values could map to more than one parameter. Research turns up more hints about the other settings, such as their short names, but no solid evidence for the kernel.sem parameter.

There is, however, an IBM support page devoted purely to this little problem, although it also doesn’t provide a concrete translation of the kernel.sem parameter. An inexperienced user would probably ignore this page, since its title says “Unix IPC resources” instead of “kernel parameters” and “Linux”; but looking back at the “Quick Beginnings” page, one notices that its first sentence reads “System V IPC resources”. IBM hid our now not-so-secret script, mqconfig, on this page, and you will find it as long as you don’t scroll right past it. The script reads kernel and software information from the system you run it on, compares it against IBM’s requirements for MQ, and reports whether the system passes or fails each of the required parameters.
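To make the kernel.sem mapping concrete, here is a minimal Python sketch. It is not the mqconfig script, and its function names are our own. It assumes Linux, where kernel.sem (like /proc/sys/kernel/sem) holds four integers in the order SEMMSL, SEMMNS, SEMOPM, SEMMNI, which “ipcs -l” reports as “max semaphores per array”, “max semaphores system wide”, “max ops per semop call” and “max number of arrays”. The values in `EXAMPLE_MINIMUM` are illustrative placeholders only; check the Quick Beginnings page for your MQ version before relying on them.

```python
from pathlib import Path

# Field order of the Linux kernel.sem parameter (and /proc/sys/kernel/sem),
# paired with the label `ipcs -l` prints for each value under "Semaphore Limits".
SEM_FIELDS = [
    ("SEMMSL", "max semaphores per array"),
    ("SEMMNS", "max semaphores system wide"),
    ("SEMOPM", "max ops per semop call"),
    ("SEMMNI", "max number of arrays"),
]

# Illustrative placeholder only; take the real minimums from IBM's documentation.
EXAMPLE_MINIMUM = "500 256000 250 1024"


def parse_kernel_sem(value: str) -> dict[str, int]:
    """Split a kernel.sem string such as '250 32000 32 128' into named fields."""
    parts = value.split()
    if len(parts) != len(SEM_FIELDS):
        raise ValueError(f"expected {len(SEM_FIELDS)} integers, got {value!r}")
    return {name: int(part) for (name, _label), part in zip(SEM_FIELDS, parts)}


def check_kernel_sem(current: str, minimum: str) -> list[tuple[str, str, int, int, bool]]:
    """Return (name, ipcs label, current, minimum, passed) for each field."""
    have = parse_kernel_sem(current)
    need = parse_kernel_sem(minimum)
    return [
        (name, label, have[name], need[name], have[name] >= need[name])
        for name, label in SEM_FIELDS
    ]


def suggested_sysctl_line(current: str, minimum: str) -> str:
    """Build a sysctl.conf line that raises failing fields and never lowers any."""
    have = parse_kernel_sem(current)
    need = parse_kernel_sem(minimum)
    values = [max(have[name], need[name]) for name, _label in SEM_FIELDS]
    return "kernel.sem = " + " ".join(str(v) for v in values)


if __name__ == "__main__":
    sem_file = Path("/proc/sys/kernel/sem")
    # Fall back to a sample value when not running on Linux.
    current = sem_file.read_text() if sem_file.exists() else "250 32000 32 128"
    for name, label, value, minimum, ok in check_kernel_sem(current, EXAMPLE_MINIMUM):
        status = "PASS" if ok else "FAIL"
        print(f"{status}  {name} ({label}): {value}, minimum {minimum}")
    print(suggested_sysctl_line(current, EXAMPLE_MINIMUM))
```

Taking the larger of the current and required value for each field, rather than pasting the required values verbatim, avoids accidentally lowering a limit that another product on the same machine has already raised.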

Once the failing settings have been corrected by copying the proper values into the sysctl.conf file, and the script is run again, each of those parameters should be reported as passing.

So for those of you other than AJ and me who will be installing MQ on Linux or Unix, save yourself some time and a headache, and use this handy script. It can be found here: http://www-01.ibm.com/support/docview.wss?rs=171&context=SSFKSJ&dc=DB520&dc=DB560&uid=swg21271236&loc=en_US&cs=UTF-8&lang=en&rss=ct171websphere

Patrick Brady is a Consultant at Prolifics based out of New York City. He has 3 years of consulting experience based around the WebSphere family of products, focusing on the administration side of customer implementations. He specializes in High Availability solutions for WebSphere MQ and Message Broker.

Tuesday, September 7, 2010

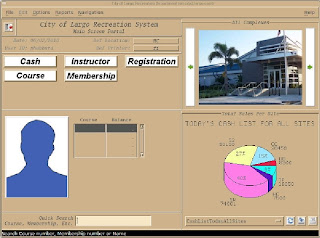

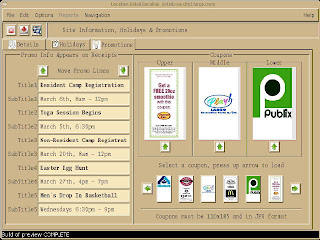

City of Largo, FL Wins With Panther Again

For the past 12 years, the city has used Prolifics’ legacy tools to build applications for its Police and Recreation Departments, starting with JAM7 on SCO. With the release of Panther 5.0 for Linux several years ago, the city migrated most of its applications off the SCO platform. One reason for staying with Panther was its portability, i.e., its ability to run on most platforms and to connect to heterogeneous databases with minimal changes.

In recent months, the city’s development team has been thrilled with the enhancements made to the Panther Linux product, which include support for anti-aliased fonts, image and text support for tab cards, and enhancements to tooltips.

The city’s next project is to web-enable the Recreation app, allowing citizens to register for classes being offered by the department.

According to their Developer/Administrator, “... I cannot over emphasize the effect that the upgraded Panther features have had with both the Recreation Department and City as a whole. We've been able to provide tools with a modern look and feel and in many cases, better than what we could buy at comparative costs.”

Sample screen shots from the Recreation Application

To see the customer's take on this solution, read Dave Richards' entry about Panther on the City of Largo Work Blog.

Amrith Kaur-Maldonado first joined Prolifics as a Consultant and then moved into the Prolifics Education Department as a JAM/Panther trainer. She has experience conducting WebSphere Developer Training at IBM training facilities. Amrith transitioned back to the Technical Support Team 7 years ago and now manages the Support Team.

TAM ESSO in the wild: A look at ISO-NE's implementation

I was recently involved in a unique implementation of IBM Tivoli Access Manager for Enterprise Single Sign-On (TAM ESSO), working with ISO-NE, a major utility company, to improve security and monitoring. ISO-NE operates in a 24/7 environment: its machines must be highly available, and employees monitor the health of the power grids from a control room using a number of shared workstations. The company is required to track which users log into each workstation and must lock machines that are not being used. Doing so complies with the Critical Infrastructure Protection (CIP) government standards and enables system notifications for user activity on any workstation. The new system integrates the existing building badges with unique user IDs, making two-factor authentication possible.

As we were planning this project, we knew how critical timing would be for ISO. Summer is considered a “high risk season” in this industry because of increased utility usage during these months, and the company’s infrastructure must be prepared to handle the activity, so we had to prepare it as quickly and efficiently as possible. Prolifics focused this implementation on ISO’s specific security and monitoring needs, and the badge reader and centralized auditing capabilities put the company at an advantage.

For those of you who are interested in hearing more, I will be speaking at Prolifics’ live TAM ESSO webinar on September 15 to discuss the solution’s implementation and success. The webinar will include a demonstration of how TAM ESSO can increase enterprise security, provide application access tracking, and increase your ROI.

Christopher Ehrsam is a Senior Security Consultant with Prolifics. Chris has been in the Identity Management field for over 10 years, having gained his experience working with the Tivoli product family at IBM.

Wednesday, August 25, 2010

Integrating with Salesforce.com

Salesforce.com (aka Salesforce) is the most commonly used cloud-based Software as a Service platform for Customer Relationship Management (CRM). Recently, we have been involved with many customers who are planning to migrate to Salesforce, or who have made a strategic decision to use it, to manage their customer contacts, track sales orders, streamline their sales processes, etc. In fact, Prolifics itself is a Salesforce customer.

Our own experiences, and those with our customers who use Salesforce, have given us an in-depth understanding of what it takes to ensure that this CRM solution is made universally available within the enterprise – to business processes that require customer information or to portal applications that mash up customer information with data from other systems. This blog entry details three patterns that we have commonly used when integrating with Salesforce and that we believe can be reused – entirely at the design level and to a good extent at the implementation level.

1. Enterprise CRM Services – Every enterprise has defined standards for its enterprise services and data formats. The reusable enterprise services are exposed via the ESB, and all the end applications use these enterprise services to communicate with end systems, so that the ESB can provide the common functionality and governance needed when performing system integration. Applying this same concept to Salesforce allows enterprises to centralize the Salesforce integration at the ESB layer (the ESB communicates with Salesforce using SOAP/HTTP web services), handle transformation, security, etc. in one place, and provide clients (processes, portals, mashups, etc.) with the consistent, enterprise-wide data representation to which they are accustomed. The enterprise services provide a generic CRM interface to the clients, so that if the CRM system has to be changed, the hundreds of clients that use it across the enterprise do not have to change. IBM WebSphere Enterprise Service Bus is commonly used to implement this pattern.

2. Publish CRM Data – Another common requirement is to pass CRM data from Salesforce to back-end systems whenever that information changes within Salesforce. The ESB provides a service (a SOAP/HTTP web service) that Salesforce invokes, with all the relevant information, when data of interest changes. This data is then transformed and passed to the back-end systems. The benefits of this approach include a centralized service definition on the ESB, data transformation, centralized security management, support for legacy applications that are not web-service-enabled [but still need CRM data], etc. IBM WebSphere Enterprise Service Bus or IBM WebSphere Message Broker is commonly used to implement this pattern. [Note: the reverse flow, from end systems to Salesforce, is handled similarly, except that instead of being invoked by Salesforce, the ESB invokes a service on Salesforce.]

3. Bulk Load of CRM Data – Customers very commonly need the ability to bulk load customer data from their existing homegrown systems into Salesforce. The same requirement also arises in mergers and acquisitions, when new customer data needs to be loaded. During the bulk load, there may also be a need to cleanse the information before loading it into Salesforce. IBM InfoSphere DataStage, IBM InfoSphere QualityStage and the IBM InfoSphere Information Server Pack for Salesforce.com together support this pattern, which involves defining ETL jobs that extract data from different sources, cleanse it, map it to the Salesforce format and load it into Salesforce.
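As a rough illustration of how the first two patterns keep clients insulated from Salesforce, here is a minimal, in-memory Python sketch. Every class and function name is hypothetical, no real Salesforce or WebSphere ESB API is called, and a plain dict stands in for the SOAP message Salesforce would send; only the field names `Id`, `Name` and `Phone` mirror the standard Salesforce Account object. The point is that the Salesforce-to-canonical transformation lives in exactly one function, so swapping CRM systems means replacing the adapter, not the clients.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass(frozen=True)
class Customer:
    """Canonical, enterprise-wide customer representation (hypothetical)."""
    customer_id: str
    name: str
    phone: str


class CrmService(Protocol):
    """Generic CRM interface from pattern 1; clients depend only on this."""

    def get_customer(self, customer_id: str) -> Customer: ...


def from_salesforce_account(record: dict) -> Customer:
    """The single place where a Salesforce-style Account record becomes canonical."""
    return Customer(
        customer_id=record["Id"],
        name=record["Name"].strip(),
        phone=record.get("Phone") or "",
    )


class InMemorySalesforceCrm:
    """Stand-in for an ESB mediation that would call Salesforce over SOAP/HTTP."""

    def __init__(self, accounts: dict[str, dict]) -> None:
        self._accounts = accounts

    def get_customer(self, customer_id: str) -> Customer:
        return from_salesforce_account(self._accounts[customer_id])


class ChangePublisher:
    """Pattern 2: accept a change notification, transform it once, fan it out."""

    def __init__(self) -> None:
        self._subscribers: list[Callable[[Customer], None]] = []

    def subscribe(self, backend: Callable[[Customer], None]) -> None:
        self._subscribers.append(backend)

    def on_salesforce_change(self, record: dict) -> Customer:
        customer = from_salesforce_account(record)
        for deliver in self._subscribers:
            deliver(customer)
        return customer


def describe(crm: CrmService, customer_id: str) -> str:
    """A client (portal, process, mashup) that knows only the generic interface."""
    customer = crm.get_customer(customer_id)
    return f"{customer.name} ({customer.phone})"


if __name__ == "__main__":
    crm = InMemorySalesforceCrm(
        {"001A": {"Id": "001A", "Name": " Acme Corp ", "Phone": "555-0100"}}
    )
    print(describe(crm, "001A"))  # Acme Corp (555-0100)

    billing_feed: list[Customer] = []  # e.g. a legacy billing system's inbox
    publisher = ChangePublisher()
    publisher.subscribe(billing_feed.append)
    publisher.on_salesforce_change({"Id": "001A", "Name": "Acme Corporation", "Phone": None})
    print(billing_feed)
```

In a real deployment the adapter and publisher would be ESB mediations, and the subscribers would include the legacy systems mentioned in pattern 2; the sketch only shows where the single transformation point sits.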

I want to conclude by saying that we at Prolifics believe in “eating our own dog food.” Prolifics has a production implementation of IBM WebSphere Enterprise Service Bus, built using the Publish CRM Data pattern described above, that publishes the data in our Salesforce instance to IBM’s Siebel-based Global Partner Portal.

In my next set of blog entries, I will focus on a couple of related topics:

- IBM’s acquisition of Cast Iron Systems and how the solution supports these patterns of integrating with Salesforce

- A set of assets that we are currently working on at Prolifics to help customers jump start their own Salesforce integration initiatives.

Rajiv Ramachandran first joined Prolifics as a Consultant and is currently the Practice Director for Enterprise Integration. He has 11 years of experience in the IT field, 3 of them at IBM, where he worked as a developer in its Object Technology Group and its Component Technology Competency Center in Bangalore. He was then an Architect implementing IBM WebSphere solutions at Fireman’s Fund Insurance. Currently, he specializes in SOA and IBM’s SOA-related technologies and products. An author in the IBM developerWorks community, Rajiv has been a presenter at IMPACT and IBM's WebSphere Services Technical Conference.